What is the problem?

OpenAI received $1 billion from Microsoft and developed ChatGPT, an artificial intelligence chatbot capable of understanding natural language and generating surprisingly detailed, human-like text. It is the latest evolution of GPT (Generative Pre-trained Transformer), a family of text-generating AI models.

It might seem that this is only entertainment. But ChatGPT can write essays and poems, make predictions, solve mathematical problems, write code, and answer questions, and that is far from a complete list of its talents. The neural network gained more than a million users in the first five days after developers opened access to it. Meanwhile, Google CEO Sundar Pichai has already called on employees of various divisions to focus their efforts on countering the threat that ChatGPT poses to the search engine. But this is not the main problem. At least for now, Ukrainians face something worse than the race between Google and other companies.

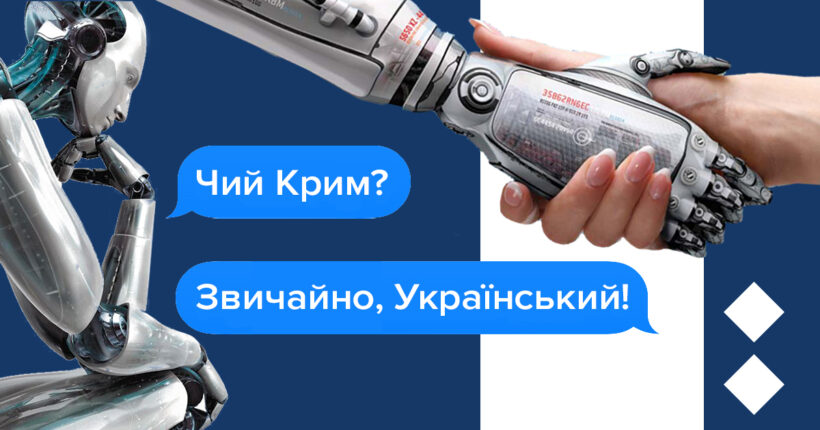

ChatGPT believes russian propaganda

The founders of OpenAI are in no hurry to share their findings with the technical community. Any innovations unique to ChatGPT are confidential, and it is unknown which AI (artificial intelligence) algorithms it uses.

Tymofiy Mylovanov, head of the supervisory board of Ukroboronprom, was the first to draw attention to the problem and make it public. When he asked "Whose is Crimea?", the bot did not answer "Ukraine." It answered that "the territory is disputed," that "some countries consider it Ukraine, and some, russia," and that "it is actually controlled by russia, which considers it theirs." In short, this is what Mylovanov writes in his post:

- The ChatGPT chatbot refuses to name the cause of the war in Ukraine and calls the war a war. It talks about the Maidan and about "many different political, historical and economic reasons," "as well as the influence of various international players" (that is, the bot hints at a proxy war).

- The ChatGPT chatbot creates texts based on all material from the Internet, including propagandistic russian news and articles, later weaving russian narratives into new materials.

Even before that, there were already large bodies of text created by artificial intelligence. Some of them can be used in the media as full-fledged journalistic materials. A prime example is CNET Money Staff, which has published about 70 articles explaining, for instance, what Zelle is and how to set up autopay for credit cards. As Mediamaker writes, citing a thread by online marketer Gael Breton, the CNET Money Staff team began experimenting with AI in November. They are trying to determine whether there is a pragmatic case for using AI to help with basic explanations of financial services topics.

The Ukrainian community did not stand aside. Politicians, software engineers, and scientists immediately started looking for a solution to this problem. Here's what they offer.

What is the solution?

Solutions for bureaucracy

AI-generated texts must be labeled

Vasyl Zadvorny, CEO of Prozorro SE, has 10 years of experience in the IT industry. Under his leadership, the former state-owned enterprise "Zovnishtorgvydav Ukraine" was successfully transformed into a state-owned IT company that administers and develops an electronic public procurement system.

Among the most "obvious" solutions that Zadvorny sees is strict regulation of how chatbots operate. That is, there should be an obligation to label AI-generated texts in major media, and particularly on social networks.

"This requires extensive lobbying, but as far as I understand, both the EU and the US are already working on it. Cons: it won't happen quickly," Zadvorny comments.

He also suggests flagging chatbot-generated texts as "possibly containing discriminatory text." This requirement should apply to major media outlets that publish chatbot-generated content. The solution is not new. When the Covid pandemic was the world's main problem and many conflicting opinions about the virus circulated online, a similar approach was already used for Covid-related topics.

Solutions for content creators

More high-quality and truthful content about Ukraine for a broad audience

Built on artificial intelligence and machine learning, the system is designed to provide information and answer questions through a conversational interface. Artificial intelligence learns from a huge sample of text taken from the Internet. According to some specialists, this is precisely the main problem with AI: it reinforces existing distortions and biases. That is why the "base" on which it learns is important. Oleksiy Pasichny, a Doctor of Philosophy and postdoc at the Royal Institute of Technology in Stockholm, explains:

"Garbage in, garbage out: whatever you feed the model, it gives out. The best thing to do is to promote the Ukrainian language and Ukrainian narratives in the most influential sources (because there is probably a part of the algorithm like PageRank). For example, all the lectures with distinguished guests given by KSE this year could be transcribed and made publicly available under their authorship."

Nadiya Babynska-Virna, an open data specialist, agrees with him. She believes journalists need to publish more quality content about Ukraine in English.

By the way, Rubryka has already joined in implementing this solution, having launched an English-language version of the site even before the start of the full-scale war. From there, you can share our materials with an English-speaking audience as well.

Solutions for those who care

Insist on transparency of chatbot algorithms

In the beginning, the guiding principle behind the creation of OpenAI was that commercial companies could not be trusted to develop increasingly powerful artificial intelligence. OpenAI used to be an independent research foundation, but in 2019 it turned into a commercial company (remember the billion dollars from Microsoft) in order to scale and compete with the tech giants. The company also continues to be financed by Elon Musk, whose statements about russia's war against Ukraine outrage a conscious part of society.

Open data specialist Nadiya Babynska-Virna believes that AI algorithms should be open and that a campaign is needed to achieve this. To that end, it is necessary to:

- communicate with Microsoft and other OpenAI sponsors;

- publicize the issue of bias by explaining how it harms the world.

Here's what you can do personally:

Step 1: Leave requests and complaints.

You can send requests and complaints to the developers so that they adjust and fix the algorithms and pay more attention to training AI on real sources. Such companies usually ask users to report cases like these "to remove flaws from the model."

This can be done on the OpenAI website, but only by those who are abroad: access to the chatbot is still closed in Ukraine.

Step 2: Update information on public resources.

Software engineer Serhii Korsunenko noticed that artificial intelligence draws some of its answers from publicly available resources that are considered trustworthy sources of information.

He observed that ChatGPT's answer to the question "What is the reason for russia's war against Ukraine?" is very close in content to the Wikipedia article.

"It is necessary to add truthful and clearly stated information to Wikipedia in English, russian, and Ukrainian. After the next retraining, the chatbot will give relevant answers."
